Built with Swift, SpriteKit, and an AI-assisted workflow (Claude + Codex)

This week I released a new game on the App Store: Alien Barrage. The project took about three months to build and ultimately grew into a roughly 20,000-line Swift/SpriteKit game.

While AI tools played a major role in accelerating development, the project still required significant engineering work: planning systems, designing gameplay, testing, debugging, integrating platform services, managing the AI workflow, and continuously refining the experience based on iteration and feedback.

The game itself was inspired by the classic arcade shooters I grew up playing. I combined elements I enjoyed from several games, added my own mechanics and pacing ideas, and let the gameplay evolve naturally throughout development. In many ways, Alien Barrage became both a technical experiment and a throwback to the arcade era.

Why I Chose Native iOS Development

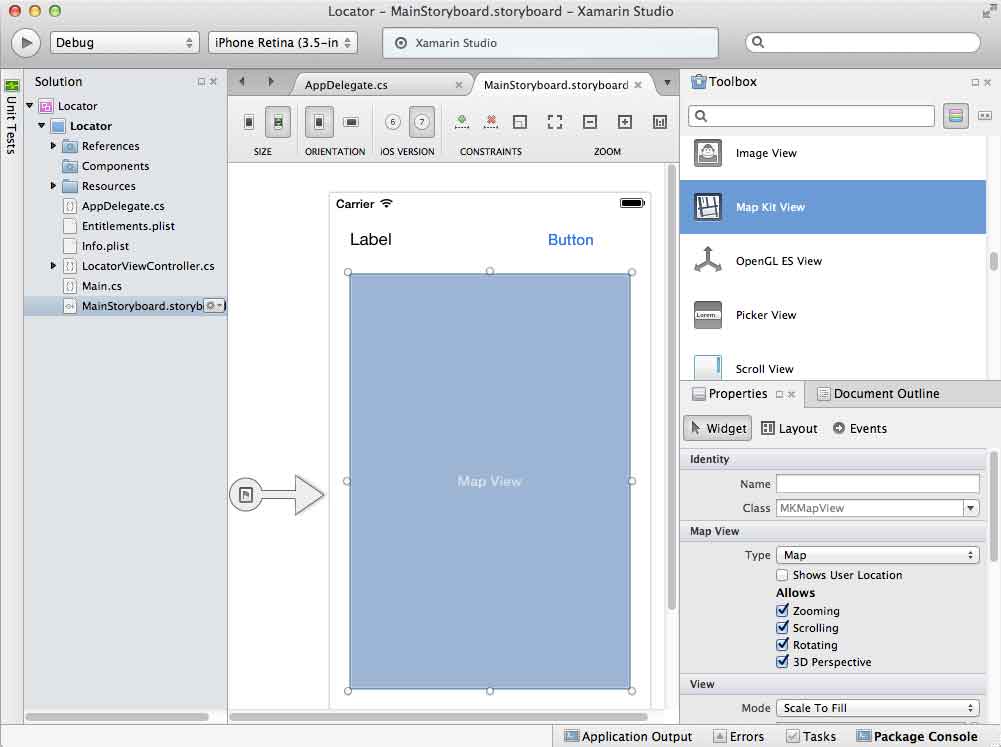

I considered building the game in Unity, but ultimately decided to use Apple’s SpriteKit framework and develop the project natively in Swift.

Part of that decision was practical: I wanted to build more directly against modern Apple-native frameworks and services, including:

- Swift and Xcode workflows

- SpriteKit

- Game Center leaderboards and achievements

- In-App Purchases

- Native App Store deployment

- Localization pipelines

The game and App Store content were ultimately translated into 14 languages.

After years of cross-platform development with Xamarin, .NET MAUI, React Native, and Adobe AIR, I also wanted to push further into modern native Apple development.

Development with AI

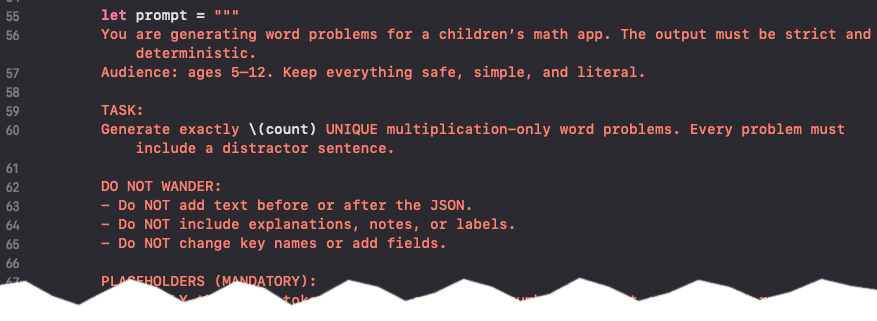

The project was developed using a custom AI-assisted workflow built primarily around Claude and Codex.

Rather than treating AI as a “one-click app generator,” I approached it more like structured pair programming. I directed architecture, gameplay systems, feature planning, debugging, testing, iteration, and project organization, while AI accelerated implementation and repetitive development tasks.

One of the biggest lessons I learned was that workflow design matters just as much as prompting.

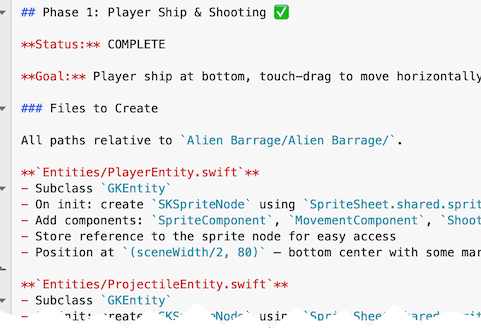

Phase-Based Development

Before writing production code, I used AI to help generate a full phase-based development outline for the game.

Each phase had:

- a clearly defined goal

- implementation scope

- testing criteria

- isolated Git branches

- completion checkpoints

The workflow looked something like this:

- Plan a phase

- Scope prompts tightly

- Let AI implement the feature

- Review and test manually

- Refine edge cases

- Merge the branch

- Move to the next phase

This created a surprisingly clean development history with structured progression and meaningful commit messages.

No production code was generated until the game's overall structure had been planned.
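As a rough illustration, a phase like the ones described above could be modeled in Swift along these lines. This is a minimal sketch, not the project's actual tooling: the `Phase` type, its fields, the branch naming scheme, and `isReadyToMerge` are all hypothetical names chosen for the example.

```swift
import Foundation

// Hypothetical model of one development phase. The type, its fields,
// and the branch naming scheme are illustrative assumptions.
struct Phase {
    let number: Int
    let goal: String
    let scope: [String]
    let testingCriteria: [String]

    // Derives an isolated branch name, e.g. "phase-03-enemy-waves".
    var branchName: String {
        let slug = goal.lowercased()
            .split(whereSeparator: { !($0.isLetter || $0.isNumber) })
            .joined(separator: "-")
        let padded = number < 10 ? "0\(number)" : "\(number)"
        return "phase-\(padded)-\(slug)"
    }

    // Completion checkpoint: the branch is ready to merge only after
    // every testing criterion has been verified by hand.
    func isReadyToMerge(verified: Set<String>) -> Bool {
        testingCriteria.allSatisfy { verified.contains($0) }
    }
}

// Example phase with made-up scope and criteria.
let phase = Phase(
    number: 3,
    goal: "Enemy Waves",
    scope: ["Spawn enemies in formation", "Advance to the next wave"],
    testingCriteria: ["Waves spawn on schedule", "Score resets on restart"]
)

print(phase.branchName)
print(phase.isReadyToMerge(verified: ["Waves spawn on schedule"]))
```

Writing the testing criteria down as data makes the merge gate explicit: a phase with any unverified criterion stays on its branch.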

What Actually Worked

Model Switching (Claude ↔ Codex)

I frequently switched between Claude and Codex depending on context limits, reasoning quality, or implementation drift.

This ended up having several unexpected advantages:

- reduced long-context degradation

- lower overall cost

- forced re-grounding between phases

- improved planning discipline

Different models also had different strengths depending on the task.

Human Validation Loops

AI would implement a feature and then stop, often providing testing instructions or validation steps.

I reviewed, tested, and refined features continuously rather than allowing large unverified code changes to accumulate.

That tight feedback loop helped keep the project stable even as the scope grew.

Git Discipline

Each phase was isolated into its own Git branch before being merged back into the main project.

That structure made experimentation safer and kept development organized as the game evolved.

AI Beyond Coding

AI was used for more than gameplay implementation.

The workflow also included:

- asset generation

- image processing

- sound integration

- video generation

- documentation generation

- command-line automation

Tools like ImageMagick and ffmpeg were integrated into the workflow with AI assistance, alongside ChatGPT for image generation and other production tasks.
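To give a sense of what this kind of command-line automation can look like, here is a minimal Swift sketch that builds ImageMagick and ffmpeg invocations for a developer machine (not for the iOS app itself). The post does not record the exact commands used, so everything here is an assumption: the function names, the file naming, the idea of scaling down from a 3x master image, and the mono 44.1 kHz audio settings.

```swift
import Foundation

// Builds ImageMagick argument lists that scale a 3x master image
// down to 1x, 2x, and 3x variants. The 3x-master convention and the
// output file names are assumptions made for this example.
func resizeCommands(master: String, baseName: String) -> [[String]] {
    let scales: [(suffix: String, percent: String)] = [
        ("", "33.3333%"), ("@2x", "66.6667%"), ("@3x", "100%")
    ]
    return scales.map { scale in
        ["magick", master, "-resize", scale.percent,
         "\(baseName)\(scale.suffix).png"]
    }
}

// Builds an ffmpeg argument list that converts a sound effect to
// mono at 44.1 kHz, overwriting the output file if it exists.
func monoAudioCommand(input: String, output: String) -> [String] {
    ["ffmpeg", "-y", "-i", input, "-ac", "1", "-ar", "44100", output]
}

// Runs one command through /usr/bin/env so the tool is looked up on
// PATH, and returns its exit status. Process is available on macOS
// and Linux, not on iOS.
func run(_ arguments: [String]) throws -> Int32 {
    let process = Process()
    process.executableURL = URL(fileURLWithPath: "/usr/bin/env")
    process.arguments = arguments
    try process.run()
    process.waitUntilExit()
    return process.terminationStatus
}

// Print the commands instead of running them, as a dry run.
for command in resizeCommands(master: "ship-master.png", baseName: "ship") {
    print(command.joined(separator: " "))
}
print(monoAudioCommand(input: "laser.wav", output: "laser-mono.wav")
    .joined(separator: " "))
```

Keeping the argument lists as plain data separates deciding what to run from running it, which makes a generated script easy to review before anything touches the asset folder.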

Me vs. Me + AI

Realistically, I probably would not have had the time to build a project of this size entirely on my own within a few months while balancing everything else.

AI did not change the need for engineering judgment; it changed the speed of execution.

The combination of:

- real-world software engineering experience

- mobile development background

- architecture planning

- debugging ability

- product direction

- and AI-assisted implementation

turned out to be extremely effective.

To me, the process felt less like “AI replacing programming” and more like advanced pair programming with a very fast collaborator.

Cross-Platform vs. Native Development

For years I leaned heavily into cross-platform development.

My background includes:

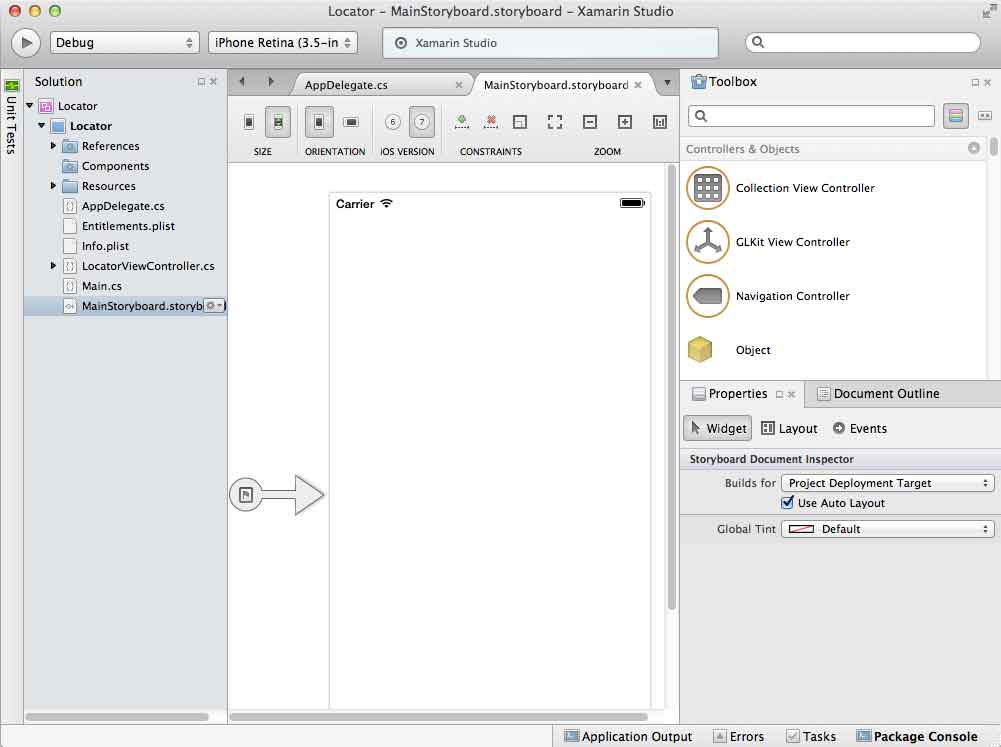

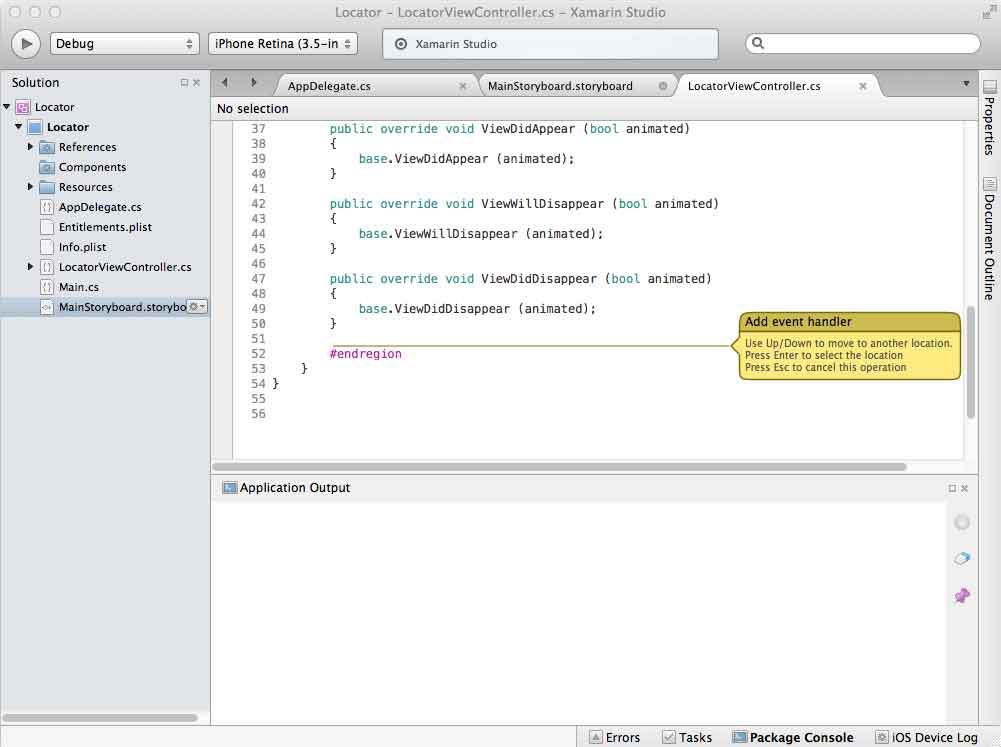

- Xamarin

- .NET MAUI

- React Native

- Adobe AIR

The biggest advantage was always efficiency: one codebase and one primary skill set for multiple platforms.

But AI-assisted development changes that equation somewhat.

Recently I’ve been focusing heavily on native apps in Swift and Kotlin while using AI-assisted workflows to accelerate implementation, experimentation, and iteration.

That has made native development significantly more appealing than it once was.

My AI Coding Journey

I started experimenting seriously with AI coding tools in 2025 using Codex and later Claude.

Since then, I’ve:

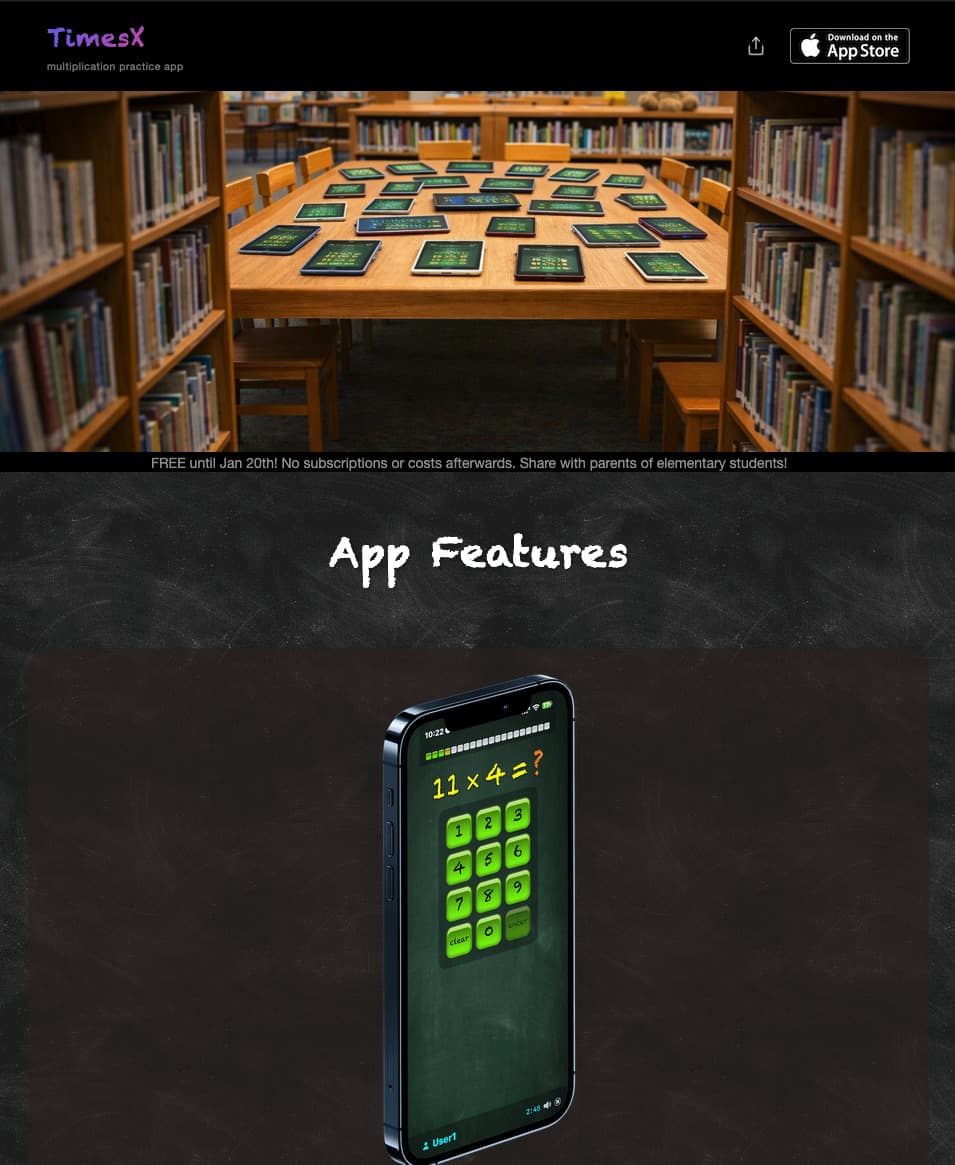

- rebuilt my Xamarin-based iOS app TimesX in native Swift

- integrated Apple Intelligence-powered content generation into TimesX

- replaced the original Xamarin version on the App Store

- created a native Android version in Kotlin optimized for Chromebooks

- built supporting websites and tooling

- developed Alien Barrage using Swift and SpriteKit

- experimented with native Apple TV and macOS applications for personal use

In just a few months, I’ve been able to build and ship substantially more software than I could previously as a solo developer.

I’ll admit it: I’m hooked on AI-accelerated development.

Not because it removes the need for engineering, but because it amplifies what experienced developers can accomplish.

Final Thoughts

One thing this project reinforced for me is that AI is most powerful when paired with real development experience.

Architecture decisions, debugging, testing, workflow design, platform knowledge, and product direction still matter enormously.

AI simply compresses the distance between idea and execution.

For experienced developers willing to adapt, that combination feels less like a threat and more like a significant advantage.